There is a phase in every IoT project that rarely appears in proposal decks, architecture diagrams, or demo videos.

It does not happen when the dashboard first goes live.

It does not happen when the data flows beautifully during a presentation.

It does not happen when stakeholders nod and say, “This looks good.”

It happens later.

Often after deployment.

Often when the system is already in use.

Often when someone depends on the data.

And the signal is simple.

“No data received.”

I have seen this moment across universities, startups, commercial deployments, and public-sector systems. The context changes, but the reaction is always the same. A sudden pause. A quiet tension. A realisation that IoT is no longer an experiment.

This is where IoT moves from being interesting to being operational.

The False Sense of Completion

One of the most common assumptions in IoT projects is that deployment marks the end of the work.

Devices are installed.

Connectivity is configured.

Dashboards are live.

From the outside, it looks complete.

In reality, deployment is only the beginning of responsibility.

IoT systems are not static assets. They rely on multiple moving parts working together every day. Networks change. Power conditions vary. Firmware evolves. Physical environments behave unpredictably. Human decisions, especially changes made by different teams, affect systems in ways that dashboards never show in advance.

A live dashboard proves that data once flowed. It does not guarantee that data will keep flowing.

Understanding Silence Before Assigning Blame

When data stops, the first instinct is often to blame the platform.

This reaction is understandable. Platforms sit at the centre of the system. They are visible. They feel like the obvious suspect.

Operational experience teaches a calmer approach.

If a platform were truly down, the impact would be wide and immediate. Multiple devices would stop reporting simultaneously. Dashboards across the board would show gaps. Alerts would trigger everywhere.

When only a subset of devices goes silent, the cause is usually local: a device, its power supply, its connection, or its site.

This distinction matters. It saves time, reduces frustration, and prevents unnecessary escalation.

Troubleshooting starts with narrowing the scope, not raising the volume.
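Narrowing the scope can be written down as a simple rule of thumb. The Python sketch below is illustrative only: the function name `triage_silence` and the 50% `platform_threshold` are hypothetical choices, not values taken from any particular platform. It compares the share of silent devices with the size of the fleet to decide which hypothesis to check first.

```python
def triage_silence(silent_ids, fleet_ids, platform_threshold=0.5):
    """Suggest where to look first when devices stop reporting.

    silent_ids: IDs of devices that have stopped reporting.
    fleet_ids: IDs of every device that should be reporting.
    platform_threshold: hypothetical share of silent devices above which a
    platform or shared upstream outage becomes the leading hypothesis.
    Returns (verdict, sorted silent IDs, silent share).
    """
    fleet = set(fleet_ids)
    if not fleet:
        raise ValueError("fleet is empty")
    silent = set(silent_ids) & fleet  # ignore IDs that are not part of this fleet
    share = len(silent) / len(fleet)
    if share >= platform_threshold:
        verdict = "platform"  # wide, simultaneous silence: check shared infrastructure
    elif silent:
        verdict = "local"     # a subset is silent: check those devices and sites
    else:
        verdict = "healthy"
    return verdict, sorted(silent), share
```

One silent sensor out of four points to a local cause; three out of four points upstream.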

Connectivity Is Fragile by Nature

Connectivity issues remain the most frequent cause of data interruptions.

In Wi-Fi deployments, a simple network change can break an entire system. An SSID rename, a password update, or an access point replacement can leave sensors disconnected until someone reprovisions them. These changes are rarely malicious. They are part of normal IT operations. The problem is that IoT devices are often invisible in those decisions.

Cellular systems face a different set of risks. Coverage shifts. Base stations go offline. Buildings interfere with signals. What worked yesterday may not work tomorrow.

Connectivity should never be assumed. It should be verified early, monitored continuously, and revisited whenever silence appears.
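One way to monitor connectivity continuously is to compare each device's last message with its own configured reporting interval, so that a device reporting hourly is not flagged after ten quiet minutes. This is a minimal Python sketch, assuming each device record carries a known `report_interval`; the `Device` class, the `overdue_devices` function, and the default of three missed reports are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Device:
    device_id: str
    report_interval: timedelta  # how often the device is configured to send data
    last_seen: datetime         # timestamp of the most recent message received


def overdue_devices(devices, now, missed_reports=3):
    """Return IDs of devices silent for longer than `missed_reports` reporting intervals."""
    overdue = []
    for dev in devices:
        allowed_gap = dev.report_interval * missed_reports  # per-device tolerance
        if now - dev.last_seen > allowed_gap:
            overdue.append(dev.device_id)
    return overdue
```

Because the tolerance scales with each device's schedule, expected quiet periods are not reported as failures; only unexpected silence is.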

Power Determines Survival

Power is often treated as a supporting detail. In reality, it determines whether an IoT system lives or quietly disappears.

Battery-powered devices fade rather than fail. Data frequency drops. Transmission becomes intermittent. Eventually, the device stops reporting, often without any explicit alert.
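The drop in data frequency can be measured before a device goes fully silent. The Python sketch below counts the messages received in a recent window and compares that with the number expected from the device's reporting interval. The function names, the one-day window, and the 80% threshold are assumptions for illustration.

```python
from datetime import datetime, timedelta


def reporting_ratio(timestamps, now, report_interval, window=timedelta(days=1)):
    """Fraction of expected messages actually received during the last `window`."""
    start = now - window
    received = sum(1 for ts in timestamps if start < ts <= now)
    expected = window / report_interval  # timedelta / timedelta gives a float
    return received / expected


def is_fading(timestamps, now, report_interval, threshold=0.8):
    """Flag a device whose delivery rate over the last day fell below `threshold`."""
    return reporting_ratio(timestamps, now, report_interval) < threshold
```

An hourly sensor that delivered only 12 of its 24 expected messages yesterday has a ratio of 0.5 and is flagged, even though it has not gone fully silent.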

Solar-powered systems introduce another layer of complexity. Weather patterns, installation angles, and energy storage capacity all influence performance. A design that works in theory can struggle in prolonged cloudy conditions.
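A rough daily energy balance shows why a run of cloudy days matters. This Python sketch assumes a constant daily load and a per-day harvest estimate, and ignores charging efficiency, temperature, and battery ageing; `simulate_battery` and all the figures in the usage example are hypothetical.

```python
def simulate_battery(daily_harvest_wh, capacity_wh, load_wh_per_day,
                     start_soc=1.0, cutoff_soc=0.2):
    """Return the index of the first day stored energy falls below the cutoff, or None.

    Simplified model: each day the harvested energy is added (clipped at full
    capacity), then a constant daily load is subtracted.
    """
    energy = capacity_wh * start_soc
    cutoff = capacity_wh * cutoff_soc
    for day, harvest in enumerate(daily_harvest_wh):
        energy = min(capacity_wh, energy + harvest) - load_wh_per_day
        if energy < cutoff:
            return day
    return None
```

With a 100 Wh battery, a 10 Wh daily load, three sunny days harvesting 15 Wh, and then cloudy days harvesting 2 Wh, `simulate_battery([15] * 3 + [2] * 20, 100, 10)` returns 11: the store drops below 20% on the ninth cloudy day. The same design never drops below the cutoff while the sun keeps delivering 15 Wh a day.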

Every remote IoT deployment should treat energy monitoring as a core metric. Battery level, charging behaviour, and power trends provide early signals long before data disappears.
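Battery trends can become an early warning by fitting a straight line to recent voltage readings and projecting when that line will cross a cutoff. This Python sketch uses ordinary least squares; the function name and the cutoff voltage are assumptions. Real discharge curves (for example, those of lithium cells) are not linear, so treat the projection as a rough indicator and recompute it as new readings arrive.

```python
def hours_until_cutoff(samples, cutoff_v):
    """Estimate hours until battery voltage reaches `cutoff_v` using a least-squares line.

    samples: list of (hours_since_start, volts) readings.
    Returns None when the fitted trend is flat or rising.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    var = sum((t - mean_t) ** 2 for t, _ in samples)
    if var == 0:
        raise ValueError("samples need distinct timestamps")
    slope = cov / var  # volts per hour
    if slope >= 0:
        return None  # not discharging according to this window
    intercept = mean_v - slope * mean_t
    t_cutoff = (cutoff_v - intercept) / slope  # time at which the line hits the cutoff
    latest_t = max(t for t, _ in samples)
    return max(0.0, t_cutoff - latest_t)
```

Readings falling by 0.1 V every 10 hours from 4.2 V reach a 3.3 V cutoff about 60 hours after the latest sample, which leaves time to schedule a site visit before the data disappears.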

Ignoring power is not a technical oversight. It is an operational risk.

Hardware Exists Outside the Cloud

IoT systems do not live in controlled environments.

They face heat, moisture, dust, vibration, and electrical surges. Lightning strikes damage boards. Water seeps into enclosures. Connectors loosen. Components age.

When hardware fails, software has no way to compensate.

This reality reinforces the importance of installation quality, enclosure design, grounding, and physical inspection schedules. Hardware resilience is not a luxury. It is part of system reliability.

Firmware Discipline Matters

Firmware rarely attracts attention until it causes problems.

Unplanned updates can break connectivity. Delayed updates leave vulnerabilities unaddressed. Inconsistent versions across devices complicate diagnosis.
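Version inconsistency is easier to manage once it is visible. This is a minimal Python sketch, assuming the platform can export a mapping from device ID to reported firmware version; `firmware_drift` and the version strings in the example are hypothetical.

```python
from collections import Counter


def firmware_drift(versions, target):
    """Summarise firmware versions across a fleet and list devices not on `target`.

    versions: dict mapping device ID -> reported firmware version string.
    Returns (count per version, sorted IDs of off-target devices).
    """
    counts = Counter(versions.values())
    off_target = sorted(d for d, v in versions.items() if v != target)
    return dict(counts), off_target
```

A result such as `{"1.4.2": 3, "1.3.9": 1}` with `["c"]` off target tells the team which device to rule out first when behaviour differs across the fleet.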

Remote deployments require structured firmware management. Teams need visibility into device versions, update status, and recovery options. Over-the-air updates reduce operational friction, but only when combined with proper controls and rollback strategies.
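A staged rollout with an automatic stop is one common way to combine over-the-air updates with controls and rollback. The Python sketch below is not tied to any OTA service: `update`, `healthy`, and `rollback` are hypothetical callbacks you would wire to your own tooling, and the wave sizes and 10% failure threshold are illustrative.

```python
def staged_rollout(device_ids, wave_sizes, update, healthy, rollback,
                   max_failure_rate=0.1):
    """Update devices in waves and roll back everything updated so far if a wave fails.

    wave_sizes: sizes of the initial waves; any devices left over form a final wave.
    update, healthy, rollback: hypothetical callbacks taking a device ID.
    Returns ("completed" or "halted", list of devices that received the update).
    """
    device_ids = list(device_ids)
    waves, start = [], 0
    for size in wave_sizes:
        waves.append(device_ids[start:start + size])
        start += size
    if start < len(device_ids):
        waves.append(device_ids[start:])  # final wave: everything not yet scheduled

    updated = []
    for wave in waves:
        if not wave:
            continue
        for dev in wave:
            update(dev)
        updated.extend(wave)
        failures = sum(1 for dev in wave if not healthy(dev))
        if failures / len(wave) > max_failure_rate:
            for dev in updated:
                rollback(dev)  # restore the previous firmware on every updated device
            return "halted", updated
    return "completed", updated
```

Starting with a single canary device, then a small wave, limits how many devices a bad build can reach before the health check stops the rollout.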

Without discipline, scaling IoT systems increases anxiety rather than confidence.

Seeing the Whole System

Effective IoT operations demand an end-to-end view.

Applications, devices, networks, power sources, and physical environments must be understood as one connected system. Failures rarely occur in isolation. They cascade across layers.

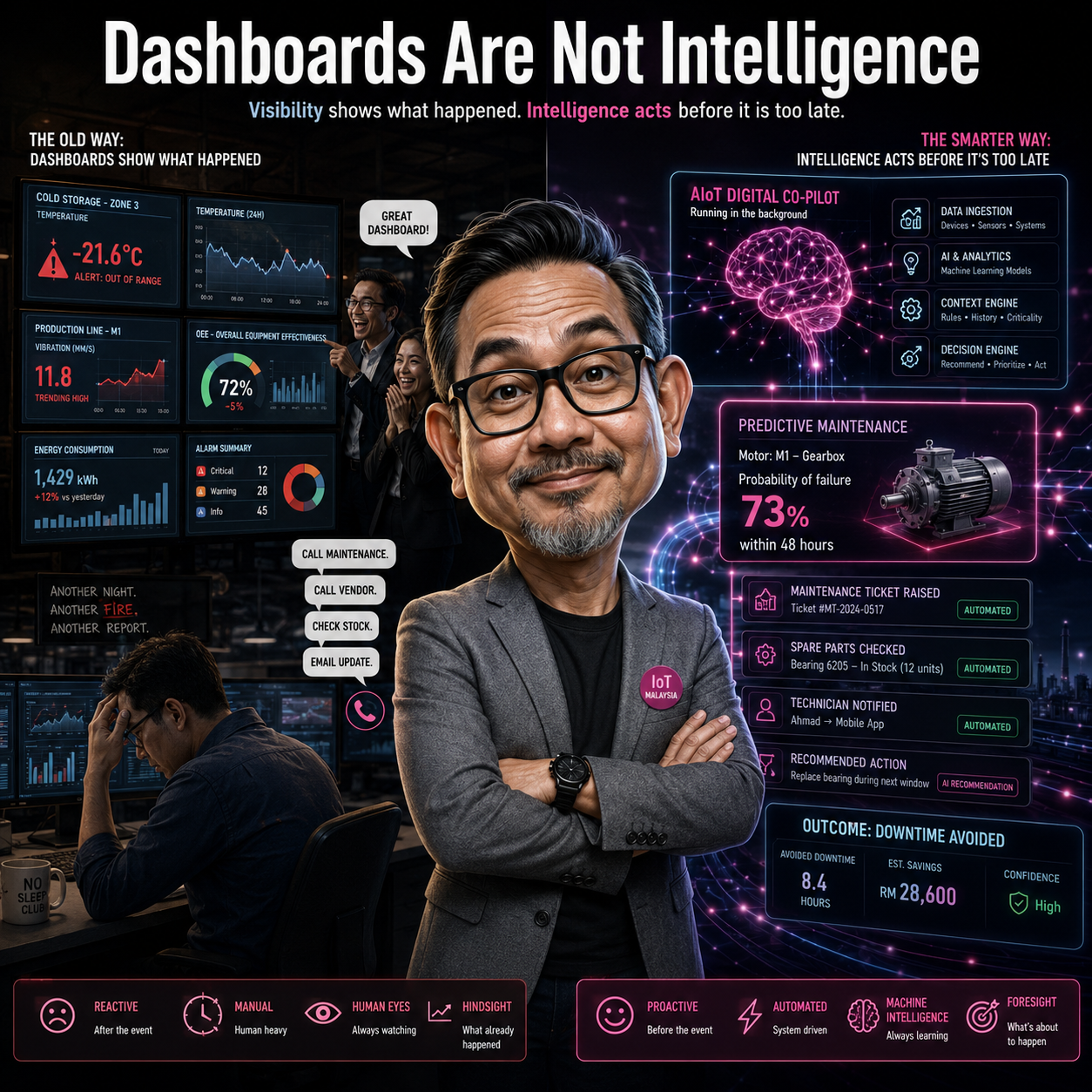

This is where platforms play their most important role. Not as dashboard builders, but as system observers. A strong platform helps teams correlate events, isolate failure points, and respond methodically rather than emotionally.
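Correlating events can start with a simple question: what do the silent devices have in common? This Python sketch assumes each device has metadata such as gateway, site, or power source; `common_factors` and the attribute names are hypothetical. It reports the share of silent devices that have each attribute value alongside that value's share of the whole fleet, because a value that is common everywhere (for example, one shared cloud region) is not by itself a useful clue.

```python
from collections import Counter


def common_factors(silent_ids, metadata, min_share=0.8):
    """Return (attribute, value, silent_share, fleet_share) for values most silent devices share.

    metadata: dict mapping device ID -> attribute dict, e.g. {"gateway": "gw-3"}.
    """
    silent = [d for d in set(silent_ids) if d in metadata]
    if not silent:
        return []
    silent_counts = Counter((a, v) for d in silent for a, v in metadata[d].items())
    fleet_counts = Counter((a, v) for attrs in metadata.values() for a, v in attrs.items())
    findings = []
    for (attr, value), n in silent_counts.items():
        share = n / len(silent)
        if share >= min_share:
            fleet_share = fleet_counts[(attr, value)] / len(metadata)
            findings.append((attr, value, share, fleet_share))
    # Strongest signal first: highest share among silent devices, then rarest fleet-wide.
    return sorted(findings, key=lambda f: (-f[2], f[3], f[0], str(f[1])))
```

If every silent sensor sits behind gateway `gw-3` while only part of the fleet does, the gateway and its uplink become the first place to look.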

Visibility enables calm decision-making. Calm decision-making protects trust.

The Quiet Shift in Mindset

Every serious IoT builder experiences a shift.

Early stages focus on features, visuals, and demonstrations. Over time, attention moves toward stability, predictability, and resilience.

The most valuable systems are often the least exciting to talk about.

They run quietly.

They recover gracefully.

They earn trust through consistency.

In operational IoT, silence is not a problem. Unexpected silence is.

Why This Matters for the Industry

As IoT systems move deeper into buildings, cities, infrastructure, and essential services, reliability becomes non-negotiable.

Data gaps affect decisions.

Decisions affect people.

People lose confidence when systems behave unpredictably.

The industry must talk more openly about operations, not just innovation. About maintenance, not just deployment. About responsibility, not just capability.

A Call to Action

If you are a student, treat troubleshooting as a skill, not an inconvenience. It will define your value long after graduation.

If you are a developer or system integrator, design for failure, not perfection. Ask what can go silent and how you will notice.

If you are an organisation deploying IoT, invest in platforms, processes, and teams that understand operations end-to-end.

IoT does not fail because it is complex. It fails when continuity is treated as an afterthought.

The dashboard going quiet is not the end of the story.

It is the moment the real work begins.

If this resonates with your experience, share your perspective.

What caused the first silence in your system, and what did it teach you?

Join the conversation.
